Computing Computing 2.0

Computing: A Concise History

By Paul E. Ceruzzi

MIT Press, 2012, 175 pages, $11.95, ISBN: 9780262517676

As the previous post points out, my initial reading of Ceruzzi’s Computing: A Concise History was a bit harsh. Trained to read the traditional monograph, I approached the work with a particular set of expectations. After just a few pages, I began to ask: “Where’s the Beef?”

Of course, I’m borrowing this question from a post on the Found History blog (later a chapter in Matthew K. Gold’s anthology Debates in the Digital Humanities) by Tom Scheinfeldt: “Where’s the Beef? Does Digital Humanities Have to Answer Questions?” Scheinfeldt, Managing Director of the Center for History and New Media and Research Assistant Professor of History in the Department of History and Art History at George Mason University, sets up the piece around some scholars’ concern over the “apparent lack of argument in digital humanities.”

Despite maintaining the point of view that everything’s an argument, by Chapter Two I was slowing down, frustrated that the text seemingly did little more than offer a laundry list of names, dates, and technological breakthroughs. It’s just a timeline of stuff! After reconsidering this stuff (transposing stuff into data), I’ve had to reconsider Ceruzzi’s decisions.

One inherent problem in writing and reading books on digital culture, art, literature, whatever, is that as soon as the book is finally published and in the reader’s hands, many of the works addressed can no longer be accessed. Programs are updated, formats become obsolete, and, while some sites aren’t maintained, others aren’t there at all. So many times I have read scholars’ apologies at the beginning and/or end of books as they, often correctly, predict that what they’ve spent maybe years writing about is no longer viewable to their readers.

So the history one makes by technological change is also erased by technological change. What is a historian to do?

What’s interesting, relevant, shifts in a flash. Only the data points remain. But which ones to choose, and how to present them? In a concise history? Suddenly, this project takes on a new dimension. From “Where’s the Beef?” to “Trim the fat.”

In the introduction, Ceruzzi addresses these ideas, noting that “a decade ago, historical narratives focused on computer hardware and software, with an emphasis on the IBM Corporation and its rivals, including Microsoft. That no longer seems so significant, although these topics remain important” (ix). After quickly introducing the book’s four themes (digitization, convergence, solid-state electronics, and the human interface), Ceruzzi concludes the first section by reminding us that “what follows is a summary of the development of the digital information age” (xvi).

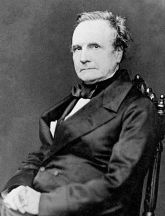

Chapter 1 opens with World War II, which Ceruzzi identifies as the origin of the digital age. He does backtrack a bit, tracing some of the contributions of nineteenth-century figures Bell, Edison, Morse, Joseph-Marie Jacquard and, in my estimation Ceruzzi’s favorite among the list, Charles Babbage and his difference engine. (Alan Turing may be added to the list, though he doesn’t make an appearance until a few chapters later. Turing is the subject of a new book by George Dyson entitled Turing’s Cathedral as well as a series of posts on HASTAC, where he is positioned as the first Digital Humanist.)

Charles Babbage & a replica of his Difference Engine.

But Ceruzzi doesn’t attempt to reinvent the wheel (at least not by creating another wheel). Instead, he hands us the raw materials and allows us to construct what we will. Hence, my original dismay: I was looking for a transparent argument or some developed narrative. I was looking for a wheel.

In the first twenty-one pages, Ceruzzi skates backwards and forwards and backwards again in a dizzying time machine that shuffles through twenty-one innovators and even more innovations, from the electric telegraph to the 1960s, when the U.S. Defense Department’s Advanced Research Projects Agency (ARPA), founded under President Eisenhower, began research and development towards computer networking, both as the first hypertext system and as the beginnings of the graphical user interface. But Ceruzzi doesn’t mention the agency’s origins, a response to the Soviet launch of Sputnik 1 and its concentration on ballistic missile tests. The narrative he presents links events to government and military molding; he only notes how computing later in the twentieth century is shaped commercially (and even less artistically).

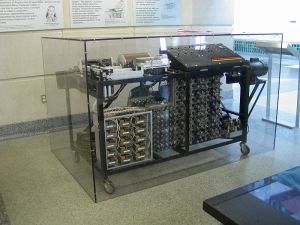

Chapters 2-5 offer a bit more focus, held together by the four aforementioned threads, but really organized chronologically. Chapter 2 offers a timeline of the first computers, highlighting the work of Konrad Zuse, Alan Turing, and Wallace Eckert, and crediting J. V. Atanasoff with inventing the first digital computer, an electronic device that used vacuum tubes for computation. Chapter 3 transitions from the ENIAC, again financed by the U.S. Army, to IBM’s 701 and later 704. Interestingly, Ceruzzi mentions that, at the time the 704 was introduced, computer programming codes became known as “languages” (another recurring theme of Ceruzzi’s is the etymology and evolution of the word “computer”), and yet he fails to highlight some of the 704’s key features, in particular, that it could “learn” from its own experiences. One point worth highlighting, then, is that the history and evolution of Artificial Intelligence has no part in Ceruzzi’s story: it is an interesting set of data points to exclude, no?

Replica of the Atanasoff-Berry Computer.

After initially feeling a bit skeptical, even disappointed, with Chapter 6 and its breezing over ARPANET, AOL, the World Wide Web, the smartphone . . . the truth is: we know how the story turns out. Facebook, Twitter, the iPhone are all easily marked, obvious points. What I like about Chapters 4-6 is the quickening pace: time speeds up with the increase of innovations, and the analogy between assembly line production and software production, between human computers and the machines, takes on a new light. Of course, some of the anecdotes presented here will be more or less interesting, relevant, depending on the reader. The invention of the integrated circuit and the IBM System/360 are notably chronicled, as is the ARPANET story, perhaps best told by Janet Abbate’s Inventing the Internet (MIT Press, 2000).

The strength of Ceruzzi’s narrative style emerges with the story of Tim Berners-Lee, the British computer scientist generally known as the inventor of the World Wide Web. His contributions are simply outlined: creating a uniform resource locator (URL), a hypertext transfer protocol (HTTP), a simple hypertext markup language (HTML), and a browser program. Not simple things, of course, but 1) the everyday reader has some basic understanding of the look and functionality of each of these items and 2) having already devoted so much time to past efforts and the genealogy of technological innovations of computing, Ceruzzi is correct to assume that the reader will certainly be in awe of Berners-Lee’s work. Ceruzzi’s strategy, then, is to make what could be an incredibly daunting and technical report into a jargon-free, easily accessible account. And this is what Computing does.

2012 Summer Olympics in London opening ceremony, directed by Danny Boyle. Here, Berners-Lee’s mission for the Internet is remembered.

As critical as I am about what data points are missing from Ceruzzi’s work (AI, innovation outside the U.S. or the West, and the influences spawned or shaped by industry and culture), Computing provides us with a manageable foundation of names, inventions, concepts, and ideas that could easily get out of hand and become overwhelming to the novice. Works only briefly mentioned (my favorite one to investigate was Ted Nelson’s Computer Lib, which I had unfortunately not read until now, but was happy to discover through Ceruzzi) and all of those not mentioned at all only raise new questions and offer alternative roads of exploration for those just entering the field.

Perhaps this is the mark of good scholarship.
